Abstract

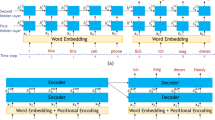

Spatiotemporal neuronal activity is ubiquitous in sensory coding in the central nervous system (CNS). Understanding how sensory information is decoded from this spatiotemporal activity is a fundamental problem in sensory perception, yet little is known about the decoding mechanism. To address this issue, we use the auditory system as a model system that exhibits spatiotemporal activity. We present a model of the auditory cortex that processes auditory information hierarchically. The model consists of three layers of two-dimensional networks. The first layer represents an auditory stimulus as spatiotemporal activity of neurons. The second layer consists of feature-detecting neurons, which extract the features of phonemes and their overlaps from the spatiotemporal activity of the first layer. The third layer combines the sound-feature information encoded by the second layer and decodes word information about the sound stimulus as a temporal sequence of attractors. Using the model, we show how phoneme and word information emerges in the hierarchical processing of the auditory cortex. We also show that the overlap between phonemes plays a crucial role in linking the attractors of phonemes. The present study may provide a clue to the mechanism by which word information is decoded from the spatiotemporal activity of neurons.

References

Ahissar E, Zacksenhouse M. Temporal and spatial coding in the rat vibrissal system. Prog Brain Res. 2001;130:75–87.

Barak O, Tsodyks M. Recognition by variance: learning rules for spatiotemporal patterns. Neural Comput. 2006;18:2343–58.

Bregman AS, Campbell J. Primary auditory stream segregation and perception of order in rapid sequences of tones. J Exp Psychol. 1971;89:244–9.

Bregman AS. Auditory scene analysis: the perceptual organization of sound. Cambridge: A Bradford Book; 1994.

Buonomano DV, Merzenich MM. Temporal information transformed into a spatial code by a neural network with realistic properties. Science. 1995;267:1028–30.

Chang EF, Rieger JW, Johnson K, Berger MS, Barbaro NM, Knight RT. Categorical speech representation in human superior temporal gyrus. Nat Neurosci. 2010;13:1428–32.

Dahmen JC, Hartley DEH, King AJ. Stimulus-timing-dependent plasticity of cortical frequency representation. J Neurosci. 2008;28:13629–39.

DeAngelis GC, Ohzawa I, Freeman RD. Spatiotemporal organization of simple-cell receptive fields in the cat’s striate cortex. II. Linearity of temporal and spatial summation. J Neurophysiol. 1993;69:1118–35.

deCharms R, Blake D, Merzenich M. Optimizing sound features for cortical neurons. Science. 1998;280:1439–43.

DeWitt I, Rauschecker JP. Phoneme and word recognition in the auditory ventral stream. Proc Natl Acad Sci USA. 2012;109:E505–14.

Drullman R. Temporal envelope and fine structure cues for speech intelligibility. J Acoust Soc Am. 1995;97:585–92.

Fishman YI, Reser DH, Arezzo JC, Steinschneider M. Neural correlates of auditory stream segregation in primary auditory cortex of the awake monkey. Hear Res. 2001;151:167–87.

Freiwald WA, Tsao DY. Functional compartmentalization and viewpoint generalization within the macaque face-processing system. Science. 2010;330:845–51.

Fowler CA. Segmentation of coarticulated speech in perception. Percept Psychophys. 1984;36:359–68.

Fujita K, Kashimori Y, Kambara T. Spatiotemporal burst coding for extracting features of spatiotemporally varying stimuli. Biol Cybern. 2007;97:293–305.

Fukunishi K, Murai N, Uno H. Dynamic characteristics of the auditory cortex of guinea pigs observed with multichannel optical recording. Biol Cybern. 1992;67:501–9.

Fukunishi K, Murai N. Temporal coding in the guinea-pig auditory cortex as revealed by optical imaging and its pattern-time-series analysis. Biol Cybern. 1995;72:463–73.

Gerstner W, Kempter R, Hemmen JL, Wagner H. A neuronal learning rule for sub-millisecond temporal coding. Nature. 1996;383:76–81.

Ghitza O. Linking speech perception and neurophysiology: speech decoding guided by cascaded oscillators locked to the input rhythm. Front Psychol. 2011;2:130.

Horikawa J, Hosokawa Y, Kubota M, Nasu M, Taniguchi I. Optical imaging of spatiotemporal patterns of glutamatergic excitation and GABAergic inhibition in the guinea-pig auditory cortex in vivo. J Physiol. 1996;497:629–38.

Izhikevich EM. Solving the distal reward problem through linkage of STDP and dopamine signaling. Cereb Cortex. 2007;17:2443–52.

Jaeger H, Haas H. Harnessing nonlinearity: predicting chaotic systems and saving energy in wireless communication. Science. 2004;304:78–80.

Karmarkar UR, Najarian MT, Buonomano DV. Mechanisms and significance of spike-timing dependent plasticity. Biol Cybern. 2002;87:373–82.

Knüsel P, Wyss R, König P, Verschure PFMJ. Decoding a temporal population code. Neural Comput. 2004;16:2079–2100.

Koch C. Biophysics of computation: information processing in single neurons (computational neuroscience). 1st ed. Oxford: Oxford University Press; 1998.

Kohonen T. Self-organizing maps. 3rd ed. Berlin: Springer; 2001.

Laurent G. Olfactory network dynamics and the coding of multidimensional signals. Nat Rev Neurosci. 2002;3:884–95.

Legenstein R, Pecevski D, Maass W. A learning theory for reward-modulated spike-timing-dependent plasticity with application to biofeedback. PLoS Comput Biol. 2008;4:e1000180.

Liberman AM. The grammars of speech and language. Cogn Psychol. 1970;1:301–23.

Maass W, Natschläger T, Markram H. Real-time computing without stable states: a new framework for neural computation based on perturbations. Neural Comput. 2002;14:2531–60.

Mauk MD, Buonomano DV. The neural basis of temporal processing. Annu Rev Neurosci. 2004;27:307–40.

Mazor O, Laurent G. Transient dynamics versus fixed points in odor representations by locust antennal lobe projection neurons. Neuron. 2005;48:661–73.

McClelland JL, Elman JL. The TRACE model of speech perception. Cogn Psychol. 1986;18:1–86.

Nikolic D, Häusler S, Singer W, Maass W. Temporal dynamics of information content carried by neurons in the primary visual cortex. In: Schölkopf B, Platt JC, Hoffman T, editors. Advances in neural information processing systems, vol 19. Vancouver: MIT Press; 2007. p. 1041–8.

Rao RPN, Ballard DH. Predictive coding in the visual cortex: a functional interpretation of some extra-classical receptive-field effects. Nat Neurosci. 1999;2:79–87.

Riesenhuber M, Poggio T. Models of object recognition. Nat Neurosci. 1999;3:1199–203.

Schnupp JWH, Hall TM, Kokelaar RF, Ahmed B. Plasticity of temporal pattern codes for vocalization stimuli in primary auditory cortex. J Neurosci. 2006;26:4785–95.

Schnupp J, Nelken I, King A. Auditory neuroscience: making sense of sound. Cambridge: The MIT Press; 2011.

Shannon RV, Zeng F-G, Kamath V, Wygonski J, Ekelid M. Speech recognition with primarily temporal cues. Science. 1995;270:303–4.

Song S, Miller KD, Abbott LF. Competitive Hebbian learning through spike-timing-dependent synaptic plasticity. Nat Neurosci. 2000;3:919–26.

Sprekeler H, Michaelis C, Wiskott L. Slowness: an objective for spike-timing-dependent plasticity? PLoS Comput Biol. 2007;3:e112.

Stevens KN. Toward a model for lexical access based on acoustic landmarks and distinctive features. J Acoust Soc Am. 2002;111:1872–91.

Tanaka K. Inferotemporal cortex and object vision. Annu Rev Neurosci. 1996;19:109–39.

Taniguchi I, Horikawa J, Moriyama T, Nasu M. Spatio-temporal pattern of frequency representation in the auditory cortex of guinea pigs. Neurosci Lett. 1992;146:37–40.

Taniguchi I, Nasu M. Spatio-temporal representation of sound intensity in the guinea pig auditory cortex observed by optical recording. Neurosci Lett. 1993;151:178–81.

Theunissen FE, Sen K, Doupe AJ. Spectral-temporal receptive fields of nonlinear auditory neurons obtained using natural sounds. J Neurosci. 2000;20:2315–31.

Tzounopoulos T, Kim Y, Oertel D, Trussell LO. Cell-specific, spike timing-dependent plasticities in the dorsal cochlear nucleus. Nat Neurosci. 2004;7:719–25.

Yamaguchi Y, Horikawa J, Taniguchi I. Neural dynamics of vocal processing in the auditory cortex. In: Poznanski RR, editor. Biophysical neural networks. New York: Mary Ann Liebert; 2001. p. 343–62.

Appendix: Model of FR Layer

As the model of the FR layer, we used a simple network model of wave propagation in the auditory cortex proposed by Yamaguchi et al. [48]. The network model of the FR layer consists of a two-dimensional array of population units, as shown in Fig. 1b. Each unit consists of a pair of excitatory and inhibitory neurons, as shown in Fig. 1c. The network has two axes: the tonotopic axis (T-axis), which represents the frequency selectivity of the input, and the propagation axis (P-axis), along which the excitatory wave propagates. Each population unit is indexed by \(ij\), where \(i\) and \(j\) denote the position on the T- and P-axis, respectively (\(i = 1, \ldots, N_T\) and \(j = 1, \ldots, N_P\)). The time evolution of the excitatory and inhibitory neurons in the \((i, j)\)th unit is given by the following equations:

where \(x_{ij}\) and \(y_{ij}\) are the internal variables of the excitatory and inhibitory neurons in the \((i, j)\)th unit, \(\tau_E\) and \(\tau_I\) are the time constants, and \(\alpha, \beta, \kappa, \Upgamma\), and \(\beta_0\) are constant parameters. The output function \(P\) is given by

where \(\lambda_z\) is the gradient parameter and \(\mu_z\) is the threshold value.
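The explicit formula for the output function is not reproduced above. As a minimal sketch only, the code below assumes a logistic sigmoid with gradient \(\lambda_z\) and threshold \(\mu_z\); this functional form and the name `output_function` are assumptions made for illustration, not a form confirmed by the source. The default values are the ones quoted in the parameter list of this appendix.

```python
import math

LAMBDA_Z = 9.0  # gradient parameter (value quoted in the appendix)
MU_Z = 2.0      # threshold value (value quoted in the appendix)


def output_function(z, lam=LAMBDA_Z, mu=MU_Z):
    """Assumed logistic sigmoid: 1 / (1 + exp(-lam * (z - mu))).

    The sigmoid shape is an assumption; the source only names a
    gradient parameter and a threshold value.
    """
    u = lam * (z - mu)
    # Numerically stable evaluation for large |u|.
    if u >= 0:
        return 1.0 / (1.0 + math.exp(-u))
    e = math.exp(u)
    return e / (1.0 + e)
```

Under this assumption the output equals 0.5 at the threshold \(z = \mu_z\), and a larger \(\lambda_z\) makes the transition around the threshold steeper.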

The external input to the \(j\)th neuron at the \(i\)th position on the tonotopic axis is given by \(\delta_j I_i\). The factor \(\delta_j\) equals 1 if \(j = 1\) and 0 otherwise, and \(I_i\) is the magnitude of the input to the \(i\)th neuron along the T-axis.
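The input rule \(\delta_j I_i\) can be illustrated with a short sketch. The function name `external_input` and the nested-list layout are hypothetical choices for illustration; the code uses 0-based indices, so the text's \(j = 1\) corresponds to column 0.

```python
def external_input(i_values, n_p):
    """Build a grid ext[i][j] = delta_j * I_i.

    i_values: input magnitudes I_i, one per position on the T-axis.
    n_p: number of units along the P-axis.
    Only the first column (j = 1 in the text, index 0 here) is driven.
    """
    return [[i_val if j == 0 else 0.0 for j in range(n_p)]
            for i_val in i_values]


# Grid size and input magnitude taken from the appendix's parameter list.
N_T, N_P = 20, 50
ext = external_input([1.0] * N_T, N_P)
```

With these values, each of the 20 rows receives an input of 1.0 in its first column and 0.0 everywhere else.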

The synaptic weight from the \((m, n)\)th neuron to the \((i, j)\)th neuron in the FR layer, \(w_{ijmn}\), is given by

where \(w_0\) is the synaptic weight in the absence of external input, and \(W_0\) is a constant parameter. The inputs \(I_i\) and \(I_m\) increase the synaptic weights of the connections between the \(i\)th and \(m\)th frequency bands. Thus, neurons in the \(i\)th frequency band are characterized by the common input \(I_i\). This input provides direct excitation only at the left edge of the P-axis; neurons in the other columns receive no direct input and are driven only through the instantaneous synaptic modulation.

The last term on the right-hand side of Eq. (8) represents the lateral inhibition between neighboring neurons, given by

The range of competition is \(Q = \{q \mid q = i - 1, i + 1\}\), and \(h\) is the magnitude of the connections.
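As a small illustration of the competition range \(Q\), the sketch below lists the nearest neighbors of position \(i\) along the T-axis. The function name `competition_range` is hypothetical, and the treatment of the edges (neighbors outside \(1, \ldots, N_T\) are dropped, with no wrap-around) is an assumption, since the text does not state the boundary condition.

```python
def competition_range(i, n_t):
    """Return the indices q in {i - 1, i + 1} that lie within 1..n_t.

    Indices are 1-based, as in the text. Dropping out-of-range
    neighbors at the edges is an assumption of this sketch.
    """
    return [q for q in (i - 1, i + 1) if 1 <= q <= n_t]
```

For an interior position such as `competition_range(10, 20)` both neighbors are returned, whereas an edge position has a single neighbor under this assumption.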

The parameter values used are as follows: \(\tau_E=30\,\hbox{ms}, \tau_I=30\,\hbox{ms}, \alpha=8.0, \beta=8.0, \beta_0=1.0, \kappa=4.0, \Upgamma=1.6, h=0.025, W_0=2.0, w_0=0.1, \lambda_z=9.0, \mu_z=2.0, I_i=1.0, I_m=1.0, N_P=50\), and \(N_T=20\).
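For anyone re-implementing the FR layer, it may help to collect these values in one place. The sketch below does so with a frozen dataclass; the class name `FRLayerParams` and the field names are hypothetical, and only the numerical values come from the list above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FRLayerParams:
    """Parameter values listed in the appendix (field names are illustrative)."""
    tau_e_ms: float = 30.0        # tau_E, time constant of excitatory neurons (ms)
    tau_i_ms: float = 30.0        # tau_I, time constant of inhibitory neurons (ms)
    alpha: float = 8.0
    beta: float = 8.0
    beta_0: float = 1.0
    kappa: float = 4.0
    gamma: float = 1.6            # written as \Upgamma in the text
    h: float = 0.025              # magnitude of lateral inhibitory connections
    w0_upper: float = 2.0         # W_0, constant parameter of the synaptic weight
    w0_lower: float = 0.1         # w_0, weight in the absence of external input
    lambda_z: float = 9.0         # gradient parameter of the output function
    mu_z: float = 2.0             # threshold value of the output function
    input_magnitude: float = 1.0  # I_i = I_m
    n_p: int = 50                 # units along the P-axis
    n_t: int = 20                 # units along the T-axis


params = FRLayerParams()
n_units = params.n_t * params.n_p  # total number of population units
```

Freezing the dataclass keeps the published values from being modified accidentally during a simulation run; a variant parameter set can be created with `dataclasses.replace`.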

Fujita, K., Hara, Y., Suzukawa, Y. et al. Decoding Word Information from Spatiotemporal Activity of Sensory Neurons. Cogn Comput 6, 145–157 (2014). https://doi.org/10.1007/s12559-013-9240-1

DOI: https://doi.org/10.1007/s12559-013-9240-1